by: Bonnie Anderson

“[A] thermostat that automatically turns on the heat whenever the temperature in a room drops below 68°F is a good example of single-loop learning.

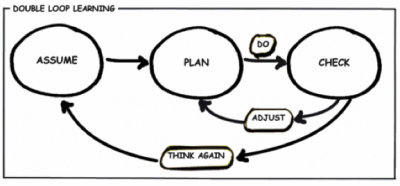

A thermostat that could ask, "why am I set to 68°F?" and then explore whether or not some other temperature might more economically achieve the goal of heating the room would be engaged in double-loop learning.1”

Innovation as a Learning Process

In his book “The Lean Startup,” Eric Ries describes the innovation process in three stages: Build - Measure - Learn.2 He encourages entrepreneurs and organizations to engage in this continuous cycle by building a minimum viable product (MVP) or quick prototype, measuring the target user’s response to and experience with the concept, and learning from this input to make incremental improvements. Babson College, a leading institution for entrepreneurship education, changes up the verbs to offer “Act, Learn, Build” as its trademark formula for new venture creation and success, known as “Entrepreneurial Thought and Action®”.

Regardless of verb choice, the concept of user testing and incorporating feedback into future versions of a product or process is not necessarily new, nor is it unique to startup culture. In the early 1960s, organizational economists Richard Cyert and James March described a company’s growth in terms of changes based on feedback:

“An organization ... changes its behavior in response to short-run feedback from the environment according to some fairly well-defined rules. It changes rules in response to longer-run feedback according to more general rules, and so on.3”

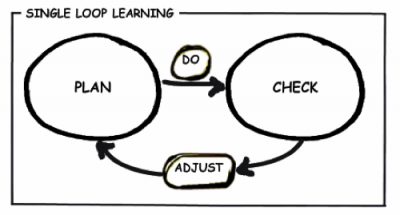

In the late 1970s, organizational behaviorists Chris Argyris and Donald Schön gave this concept a name: The Learning Loop4. They distinguish two types: the Single Learning Loop is one in which something is planned, implemented, measured, and adjusted, and the Double Learning Loop extends this to evaluate the original assumptions that guided the planning5.
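To make the distinction concrete, here is a minimal sketch that returns to the thermostat from the opening quote. It is an illustration only, not anything Argyris or Schön wrote: the `Thermostat` class, the candidate setpoints, and the `cost_of` and `is_comfortable` functions are all hypothetical stand-ins for "what it costs" and "whether the goal is still met."

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Thermostat:
    # The governing assumption that single-loop learning never questions.
    setpoint_f: float = 68.0

    def single_loop(self, room_temp_f: float) -> bool:
        """Single loop: measure, compare to the fixed setpoint, adjust.

        Returns True when the heat should be on.
        """
        return room_temp_f < self.setpoint_f

    def double_loop(
        self,
        candidate_setpoints: Iterable[float],
        cost_of: Callable[[float], float],
        is_comfortable: Callable[[float], bool],
    ) -> float:
        """Double loop: ask "why this setpoint?" and revise it if warranted.

        Adopts the cheapest candidate that still meets the goal. If no
        candidate meets the goal, the current setpoint is kept.
        """
        acceptable = [s for s in candidate_setpoints if is_comfortable(s)]
        if acceptable:
            self.setpoint_f = min(acceptable, key=cost_of)
        return self.setpoint_f


# Hypothetical usage: cost rises with the setpoint, and the room is
# considered comfortable at 66°F or above (both are assumptions).
thermostat = Thermostat()
heat_on_before = thermostat.single_loop(66.0)   # 66 < 68, so heat is on
new_setpoint = thermostat.double_loop(
    [64.0, 66.0, 68.0, 70.0],
    cost_of=lambda s: s,
    is_comfortable=lambda s: s >= 66.0,
)
heat_on_after = thermostat.single_loop(66.0)    # 66 is not below 66
```

The single loop only ever changes the action (heat on or off). The double loop changes the rule that the single loop follows, which is the step that evaluates the original assumption.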

The Course Design Learning Loop

Here in the TLL, we consider the first run of a learning experience to be our “live prototype”, though many sub-prototypes may have been built and tested leading up to that first offering. The debut of a new learning experience provides an excellent opportunity to get real-time data (both quantitative and qualitative) from actual users (including students, instructors, and administrative and support teams) about how the experience is received. Keeping pace with these data requires that course design teams meet at least weekly to review, discuss, and answer three key questions:

- What needs to be changed immediately to improve the learning experience mid-stream?

- How can we leverage the lessons we are learning to improve other experiences we are currently developing?

- What opportunities are we discovering for ongoing improvements we can make to this learning experience before it is offered again?

We call these conversations “Learning Loop” meetings. A typical 60-minute learning loop meeting might use something like the following agenda:

- Focus: Last Session/Week (15 minutes)

  - Notes: Student Feedback, Facilitator Feedback, Assessment Outcomes

- Focus: Current Session/Week (15 minutes)

  - Notes: Engagement, Questions, Issues, Communication

- Focus: Upcoming Session/Week (15 minutes)

  - Notes: What should we anticipate? What should learners expect?

- Focus: General (15 minutes)

  - Notes: What else should be reviewed, discussed, or considered at this time?

This model allows us to examine our early assumptions about how learners will interact with the material, with our facilitators, and with one another, based on the actual results we encounter as the course unfolds in real time. For example, when we designed a series of short-form learning experiences known as “modular learning assets,” or MLAs, we assumed that participants would value the opportunity to network with like-minded professionals via content-related discussion boards. While this proved true, feedback from participants and facilitators alike indicated that these boards were too busy: too many people were posting, and the threads were difficult to follow. In the next offering, we divided participants into sections so that no participant was in any one thread with more than fifty people. Because fifty is still a high number, we also increased the number of threads to which participants could respond, further separating the conversations by specific areas of interest. In the next iteration, we will determine the effectiveness of this change.
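For readers who want to try the sectioning idea, the following is a rough sketch of one way to split a roster so that no section exceeds fifty people. It is not a description of how our sections were actually assigned: the function name `split_into_sections`, the `max_size` parameter, and the choice to balance section sizes evenly are all assumptions made for illustration.

```python
import math
from typing import List, Sequence


def split_into_sections(participants: Sequence[str], max_size: int = 50) -> List[List[str]]:
    """Split a roster into the fewest sections with at most max_size people each.

    Section sizes are balanced so they differ by at most one person.
    """
    if max_size < 1:
        raise ValueError("max_size must be at least 1")
    total = len(participants)
    if total == 0:
        return []

    section_count = math.ceil(total / max_size)
    base_size, extra = divmod(total, section_count)

    sections: List[List[str]] = []
    start = 0
    for index in range(section_count):
        # The first `extra` sections take one additional participant.
        size = base_size + (1 if index < extra else 0)
        sections.append(list(participants[start:start + size]))
        start += size
    return sections


# Hypothetical roster of 120 participants, split under the fifty-person cap.
roster = [f"participant_{n}" for n in range(120)]
section_sizes = [len(section) for section in split_into_sections(roster)]
```

Balancing the sizes avoids ending up with two full sections and one nearly empty one, which would recreate the "too busy" problem in some threads and leave others too quiet.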

Each learning experience we create represents a number of similar experiments to test an assumption, a theory, a new strategy, a new technology, etc. Such tests have become a core vehicle for the TLL’s pursuit of continuous improvement as related to our processes, collaborative efforts, and products. With this iterative approach to our work, we embrace the notion that learning never ends - not for students, not for instructors, and not for design teams. Instead, it loops back and forth, getting richer with each pass. Such is the meaning of the Learning Loop.

References

1 Argyris, Chris. "Teaching Smart People How to Learn." (1991).

2 Ries, Eric. "The Lean Startup." New York: Crown Business (2011).

3 Cyert, Richard M., and James G. March. "A Behavioral Theory of the Firm." Englewood Cliffs, NJ (1963).

4 Argyris, Chris, and Donald A. Schön. Organizational learning: A theory of action perspective. Vol. 173. Reading, MA: Addison-Wesley, 1978.

5 Argyris, Chris. "Double‐Loop Learning." Wiley Encyclopedia of Management (2000).